Apache Spark: MapPartitions — A Powerful Narrow Data Transformation

mapPartitions is a powerful Spark transformation that lets programmers process each partition as a whole, writing custom logic in the style of ordinary single-threaded code. This story highlights its key benefits.

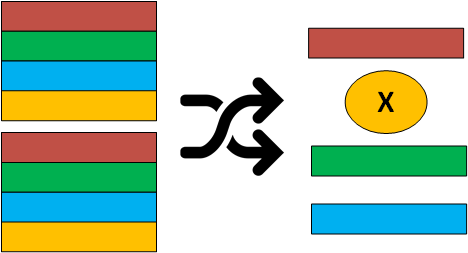

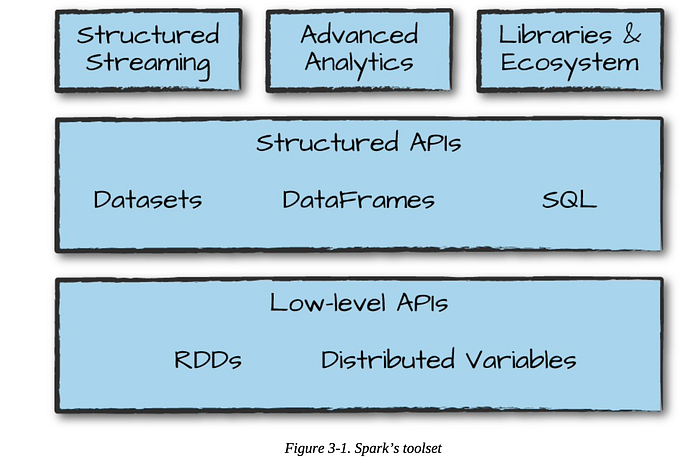

Apache Spark, at a high level, provides two types of data transformations for use in data analytics programs: narrow ones and wide ones. A narrow transformation computes each partition of a Spark Dataset/RDD from a single partition of the parent Dataset/RDD, while a wide transformation computes each partition from multiple partitions of the parent Dataset/RDD.

Apache Spark provides a variety of narrow transformations, such as map, mapToPair, flatMap, flatMapToPair, and filter. Among these, mapPartitions is the most flexible, because it hands the user a whole partition rather than one record at a time. Used judiciously, it can substantially improve the performance and efficiency of the underlying Spark job.

The mapPartitions transformation is applied to each partition of the Spark Dataset/RDD, whereas most other narrow transformations work on each element of a partition. mapPartitions takes an iterator over the data collection in a partition and returns an iterator over a new data collection. The sizes of the input and output collections may differ.
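The iterator-in, iterator-out shape can be sketched in plain Python, so it runs without a cluster. The function name `parse_positive_ints` and the sample data are hypothetical, and plain lists stand in for partitions; in PySpark, a function of this shape is what one would pass to `rdd.mapPartitions(...)`.

```python
from typing import Iterable, Iterator


def parse_positive_ints(partition: Iterable[str]) -> Iterator[int]:
    """Called once per partition: parse records and keep only positive integers."""
    for record in partition:
        try:
            value = int(record)
        except ValueError:
            continue  # drop malformed records instead of emitting nulls
        if value > 0:
            yield value


# Two lists stand in for two partitions of an RDD (an assumption for illustration).
partitions = [["1", "x", "-4", "7"], ["", "12"]]
results = [list(parse_positive_ints(p)) for p in partitions]
```

Note that the output of each partition is shorter than its input: parsing and filtering happen in a single pass, and the function is lazy because it yields records one at a time rather than building a list.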

mapPartitions provides seven key benefits, listed below:

- Low processing overhead: For data processing doable via map, flatMap or filter transformations, one can always opt for mapPartitions, provided that the underlying transformation logic is light on memory. Transformations such as map and flatMap incur the overhead of a function call for every element of the data collection residing in a partition. mapPartitions, by contrast, incurs only one function invocation for the whole data collection residing in a partition.

- Combined Processing Opportunity: mapPartitions also provides the opportunity to combine a map/flatMap operation with a filter operation. This combination can make the Spark job more efficient, since the overhead of setting up and managing multiple data transformation steps is avoided.

- Efficient Local Aggregation: Since mapPartitions works at the partition level, it gives the user the opportunity to aggregate within each partition. This local aggregation becomes very important in aggregation (reduce) operations on large data sets because it reduces the amount of data that has to be shuffled. The amount of reduction varies from dataset to dataset: in data sets where each partition carries multiple entries per aggregation key, local aggregation reduces the shuffled data significantly. Less shuffle data in turn improves the efficiency and reliability of the reduce operations.

- Global Aggregation Opportunity: Similar to local aggregation, mapPartitions can let the user perform an efficient global aggregation that avoids the data shuffle entirely. However, this opportunity is available only when the data is partitioned in such a way that all the data records (to be aggregated) for an aggregation key reside in a single partition.

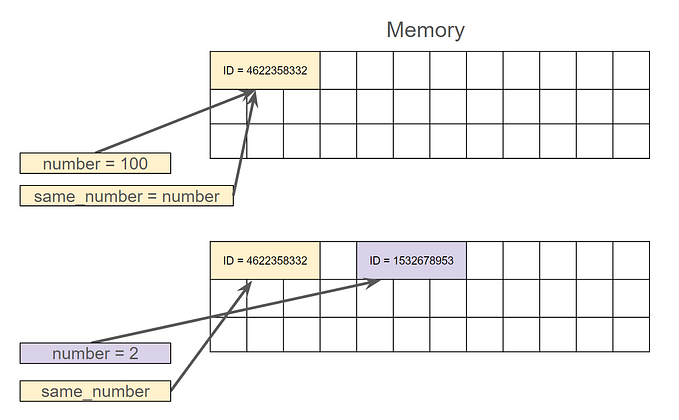
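The local aggregation idea above can be sketched in plain Python. This is an illustration under stated assumptions, not Spark code: the function name `count_per_partition` is hypothetical, lists stand in for partitions, and a simple merge loop stands in for the shuffle and reduce step that Spark would perform.

```python
from collections import Counter
from typing import Iterable, Iterator, Tuple


def count_per_partition(partition: Iterable[str]) -> Iterator[Tuple[str, int]]:
    """Pre-aggregate inside one partition: one (key, count) pair per distinct key."""
    counts = Counter(partition)
    return iter(counts.items())


# Two lists stand in for two partitions holding 7 records in total.
partitions = [["a", "b", "a", "a"], ["b", "b", "c"]]
partials = [pair for p in partitions for pair in count_per_partition(p)]

# Stand-in for the shuffle + reduce step: merge the partial counts by key.
totals = Counter()
for key, count in partials:
    totals[key] += count
```

Here only the partial (key, count) pairs would need to cross the shuffle boundary instead of every raw record. The trade-off is that the `Counter` holds one entry per distinct key of the partition in memory, so this suits partitions with a manageable number of keys.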

- Avoidance of Repetitive Heavy Initialization: mapPartitions also comes to the rescue when the same heavy initialization is required before processing each data record residing in a partition. Usual narrow transformations like map and flatMap become very inefficient in such cases, since they incur the major overhead of repeated initialization/de-initialization. With mapPartitions, the heavy initialization is executed only once and suffices for all the data records residing in a partition. An example of a heavy initialization is opening a DB connection to update/insert a record.

- Avoidance of Explicit Filtering Step: Since mapPartitions can return a data collection whose size differs from that of the input collection residing on a partition, users can avoid the subsequent explicit filtering step otherwise needed to remove the unwanted records/null values that a one-to-one map transformation leaves in the collection. (flatMap can also drop a record by emitting an empty result for it.)

- Stateful Partition-Wise Processing: mapPartitions also provides the power to process a data partition with respect to state/correlation that is local to that partition only. In some cases, a partition has to be processed as a whole because the processing depends on some correlation or state shared between the data records of that partition. In such rare cases, mapPartitions is the narrow transformation that fits.
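The once-per-partition initialization and the partition-local state described in the list above can be sketched in plain Python. Everything named here is hypothetical: `FakeConnection` is a stand-in for a costly resource such as a DB connection, and lists stand in for partitions.

```python
from typing import Iterable, Iterator, Tuple


class FakeConnection:
    """Hypothetical stand-in for a costly resource such as a DB connection."""

    opened = 0  # counts how many connections have been created

    def __init__(self) -> None:
        FakeConnection.opened += 1

    def lookup(self, key: int) -> str:
        return f"row-{key}"

    def close(self) -> None:
        pass


def enrich_partition(partition: Iterable[int]) -> Iterator[Tuple[int, str]]:
    """Open one connection per partition and reuse it for every record."""
    conn = FakeConnection()
    try:
        for key in partition:
            yield key, conn.lookup(key)
    finally:
        conn.close()  # runs after the last record has been consumed


def deltas_within_partition(partition: Iterable[int]) -> Iterator[int]:
    """Stateful pass: emit the difference between each record and the previous one."""
    previous = None
    for value in partition:
        if previous is not None:
            yield value - previous
        previous = value


# Two lists stand in for two partitions holding 5 records in total.
partitions = [[1, 2, 3], [10, 20]]
enriched = [list(enrich_partition(p)) for p in partitions]
deltas = [list(deltas_within_partition(p)) for p in partitions]
```

With five records spread over two partitions, the connection is created twice rather than five times. In `deltas_within_partition`, the `previous` variable is state that lives for exactly one partition, so no delta is computed across the partition boundary between 3 and 10.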

Get a copy of my recently published book on Spark Partitioning: https://www.amazon.com/dp/B08KJCT3XN/